Linguistic Testing for Arabic Localization: A Practical QA Guide

Linguistic review tells you if the Arabic is correct. Linguistic testing tells you if it works. Here is the QA methodology that catches what review misses — before your Arabic product ships.

Workshop: Localization for Web 3.0 and AI Applications · Article 3 of 4

Note on this article: The Arabic version is written for Arabic translators and linguists conducting the testing themselves. This English version addresses the localization manager or QA lead who is designing the testing process, allocating resources, and reviewing what their vendor delivers. Same methodology, different vantage point.

In Article 1 we covered terminology: building the term register that makes consistency possible before a single string is translated. In Article 2 we covered the three constraints of Arabic UI localization: space, layout mirroring, and cultural register.

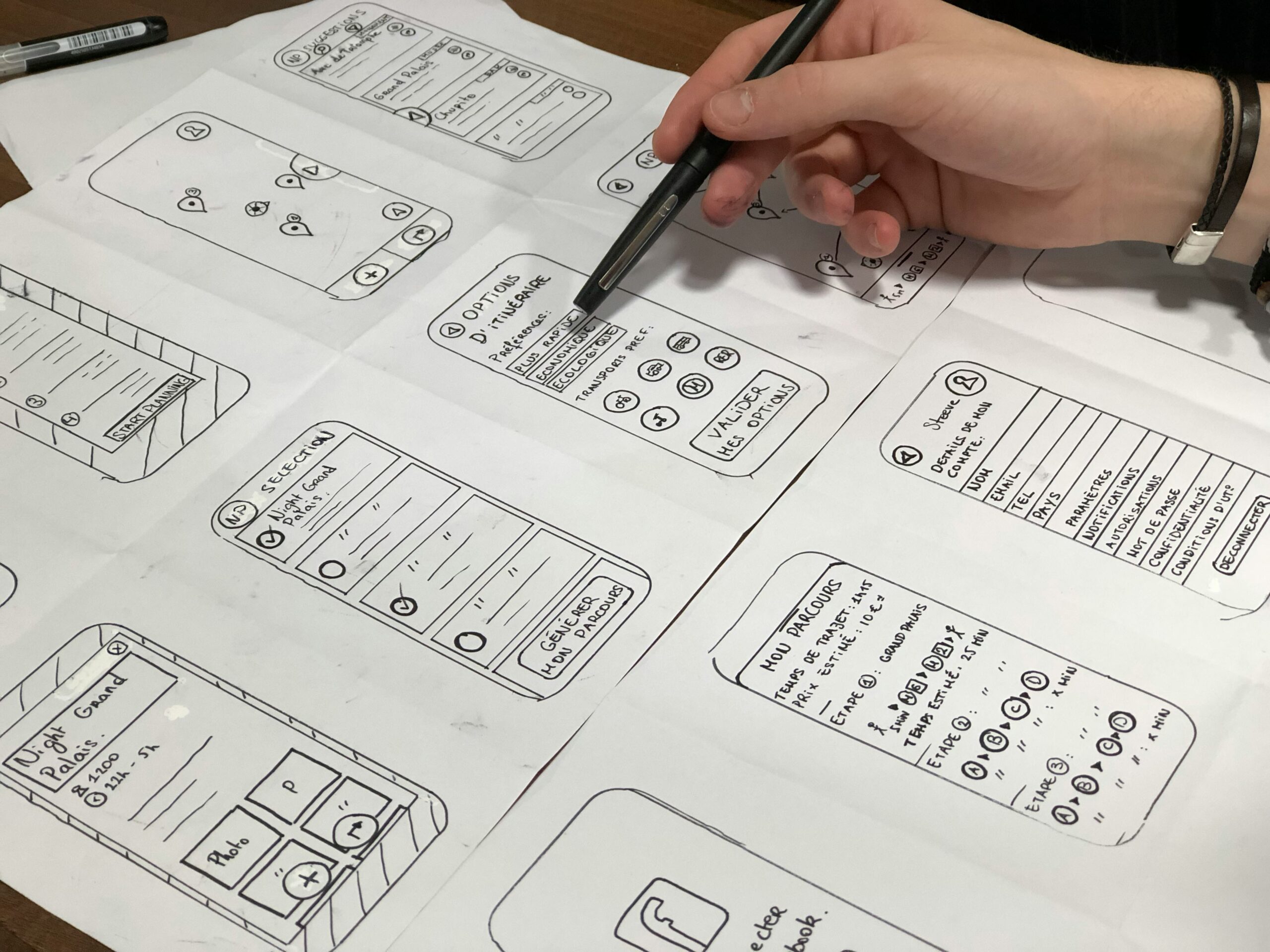

Both of those stages happen on paper — in spreadsheets, term registers, and style guides. This article covers the stage where translation becomes experience: linguistic testing.

Most localization projects skip linguistic testing entirely, or fold it into linguistic review as though they were the same thing — and they are not. Linguistic review asks: “Is this text correct?” Linguistic testing asks: “Does this text work in this context?” The difference is not semantic. It is the difference between an excellent translation on a spreadsheet and a disaster in a shipped product.

Why Review Alone Is Not Enough

Consider this scenario: a professional Arabic translator has reviewed every string in the localization file. Every sentence is grammatically correct. Every term is consistent with the register. Every choice is justified. The file goes to the developer, gets integrated into the application, the product ships — and the first Arabic-speaking user discovers that the “Next” button text overflows its container, that the most common error message appears in a state that makes it unreadable, and that the page title in the browser tab is truncated at the seventh character.

The translator did not make an error. The reviewer did not make an error. Testing was skipped.

Linguistic testing has a natural analog in the software development cycle: developers test code, UX designers test experience, QA engineers test functionality. But testing whether the translation works in its actual environment falls in a gray zone between these roles — and it frequently belongs to no one.

A correct translation appearing in the wrong context is a localization defect. Finding it after launch costs twenty times more to fix than finding it in testing.

Five Arabic Localization Defect Types That Review Does Not Catch

Linguistic testing looks for specific categories of defects that do not appear in text-file review. These are the five most common in Arabic localization projects:

1. Text Overflow: The translated string is longer than the container it was designed for. A button that holds “Submit” can hold «إرسال», and that is fine. But when “Continue to payment” becomes «المتابعة إلى صفحة الدفع» in a button sized tightly around the English label, the problem is visible in the product and invisible in the string file. This is the most common defect type in Arabic UI localization and the one most reliably missed by text-only review.

2. Truncation: The system cuts the text at a fixed character limit, with or without an ellipsis. «إعدادات الحساب الشخصي» becomes «إعدادات الحسا…» in a narrow navigation bar. Worse than arbitrary truncation is truncation that changes the meaning. This happens more often in Arabic than in European languages because Arabic is written in connected script: letters change shape according to their position in the word, so a word cut partway through can read as something unrecognizable rather than merely incomplete.

3. Bidirectional Rendering Issues (Bidi): Arabic text renders incorrectly when it mixes with LTR elements such as numbers, URLs, technical terms, and brand names. A string like «رصيدك هو $٥٠٠ في حساب Premium» can have its visual word order scrambled if the embedded elements are not individually isolated with the correct direction markup (for example, a dir attribute in HTML or Unicode directional isolate characters). This is not a translator error, but it is a defect the linguistic tester must identify and report.

4. Dynamic State Errors: Text that looks correct in its default state breaks in edge-case states. “Hello, [username]” works fine when the username is short. When the username is thirty characters long, or contains Latin characters, the directionality of the entire string may collapse. Linguistic testing covers edge cases, not just the ideal state.

5. Pluralization and Inflection Errors: English has two grammatical numbers: singular and plural. Arabic needs more forms: beyond the singular and the dual, the noun takes different forms for counts of 3–10, for 11–99, and for round hundreds and above. The Unicode CLDR plural rules that most localization frameworks follow define six categories for Arabic: zero, one, two, few, many, and other. A system built on the “1 item / N items” model will produce «١ عنصر / ٢ عناصر / ٥ عناصر», but «٢ عناصر» is grammatically wrong in Arabic (the correct form is «عنصران», the dual). This defect is invisible in static string review and appears only in dynamic testing.
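To make the pluralization defect concrete, here is a minimal Python sketch of how a count can be mapped to one of the plural categories that Unicode CLDR defines for Arabic (zero, one, two, few, many, other). The function names and the sample forms for «عنصر» are illustrative assumptions, not taken from any particular product or library; a real application would normally rely on its i18n framework's plural support and load the forms from its localization files.

```python
def arabic_plural_category(n: int) -> str:
    """Return the CLDR plural category for a non-negative integer in Arabic."""
    if n == 0:
        return "zero"
    if n == 1:
        return "one"
    if n == 2:
        return "two"
    if 3 <= n % 100 <= 10:
        return "few"
    if 11 <= n % 100 <= 99:
        return "many"
    return "other"  # 100, 101, 102, 200, ...


# Illustrative forms for the noun "عنصر" (item). These strings are an
# assumption for the example; a linguist should supply the real ones.
ITEM_FORMS = {
    "zero": "لا توجد عناصر",
    "one": "عنصر واحد",
    "two": "عنصران",
    "few": "{n} عناصر",
    "many": "{n} عنصرًا",
    "other": "{n} عنصر",
}


def format_item_count(n: int) -> str:
    """Pick the form that matches the count and insert the number."""
    return ITEM_FORMS[arabic_plural_category(n)].format(n=n)


# Counts chosen to hit every category at least once.
for count in (0, 1, 2, 5, 11, 100, 103):
    print(count, arabic_plural_category(count), format_item_count(count))
```

A tester can use the same list of counts as a Round 3 input: if the product shows the same noun form for 2, 5, and 11, the pluralization logic is almost certainly built on the two-form English model.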

The Four-Round Testing Methodology

Professional linguistic testing does not happen in a single pass. It runs across four rounds of increasing depth:

Round 1 — Static Display Test: The tester reviews every screen in its default state, looking for text overflow, truncation, and text direction errors in static strings. This round catches 60–70% of defects and is the fastest to execute. It is a prerequisite for the rounds that follow: there is no point testing interactions in a UI whose static display is broken.

Round 2 — Interaction Test: The tester executes every primary user flow: registration, login, completing a purchase, changing settings, triggering an error message. In each flow, the focus is on strings as they appear in actual context — not what was written in the file, but what renders on screen at each step. Many strings behave correctly in isolation and incorrectly in sequence.

Round 3 — Edge Case Test: Test data is designed to break assumptions: a one-character username, a thirty-character username, a balance with three zeros, a zero balance, a date at the end of the century, a product name containing both Arabic and Latin characters. This round surfaces pluralization errors, Bidi issues that only appear with specific data combinations, and layout failures that only occur at extreme input lengths.

Round 4 — Full User Journey Test: The tester runs a complete session from a real user’s perspective, without analytical stops. The goal here is not to find specific defects but to identify what feels “off” or “unnatural” even when it is not technically wrong. Register inconsistencies, awkward phrasing chains, and cultural misfits that pass individual string review accumulate into a noticeable overall impression — and Round 4 is what surfaces that impression.
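For the Round 3 edge cases, it can help to pre-screen rendered strings and decide which ones deserve a close look for Bidi problems. The following is a rough sketch using Python's standard unicodedata module. The function name and the rule it applies (flag any string that contains Arabic or Hebrew letters together with Latin letters or digits) are assumptions chosen for illustration; the rule is deliberately conservative and only tells the tester where to look, not whether the rendering is actually wrong.

```python
import unicodedata

RTL_CLASSES = {"R", "AL"}       # strong right-to-left letters (Hebrew, Arabic)
LTR_CLASSES = {"L"}             # strong left-to-right letters (Latin and others)
NUMBER_CLASSES = {"EN", "AN"}   # European digits and Arabic-Indic digits


def needs_bidi_inspection(text: str) -> bool:
    """Return True when a string mixes RTL letters with LTR letters or digits."""
    classes = {unicodedata.bidirectional(ch) for ch in text}
    has_rtl = bool(classes & RTL_CLASSES)
    has_ltr_or_digits = bool(classes & (LTR_CLASSES | NUMBER_CLASSES))
    return has_rtl and has_ltr_or_digits


samples = [
    "إرسال",                              # pure Arabic
    "Submit",                             # pure Latin
    "رصيدك هو $٥٠٠ في حساب Premium",      # Arabic with digits and a brand name
]

for sample in samples:
    print(needs_bidi_inspection(sample), sample)
```

Strings that come back flagged are the ones to check on screen with the real data combinations, since the file itself will not show how they render.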

Testing Tools: What You Actually Use

Linguistic testing for most projects does not require expensive specialized tooling. The effective toolkit is straightforward:

Manual checklist: A document listing every screen, every interaction flow, and every dynamic state to be tested, with a column for each of the five defect types above. The tester completes it screen by screen. Simple, effective, and repeatable across projects.

Pseudo-localization: A technique used before translation is complete. It replaces English source strings with “fake” versions using extended characters (for example, converting “Edit Profile” to “[!!! Éďîţ Þŕôƒîļé !!!]”) to surface space and container problems before real translations are inserted. Many localization platforms and i18n toolchains offer a pseudo-localization mode. It is most useful for the development team at an early stage, before localization even begins, to identify containers that cannot accommodate expansion without engineering changes. Running pseudo-localization before engaging translators catches structural problems at their cheapest fix point.
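As an illustration of the idea, pseudo-localization can be sketched in a few lines of Python. Everything here is an assumption made for the example: the accent map covers only a handful of letters, the 40% padding is an arbitrary expansion figure, and the {name}-style placeholder syntax is hypothetical. Established tools do this more thoroughly, so treat this as a way to understand the technique, not as a replacement for them.

```python
import re

# Partial map from plain Latin letters to accented look-alikes.
ACCENT_MAP = str.maketrans({
    "a": "á", "e": "é", "i": "î", "o": "ô", "u": "ü",
    "A": "Á", "E": "É", "I": "Î", "O": "Ô", "U": "Ü",
    "c": "ç", "d": "ď", "l": "ļ", "n": "ñ", "r": "ŕ", "t": "ţ",
})

# Placeholders such as {username} must survive untouched.
PLACEHOLDER = re.compile(r"(\{[^{}]*\})")


def pseudo_localize(source: str, expansion: float = 0.4) -> str:
    """Accent the letters, pad the string, and wrap it in visible markers."""
    parts = PLACEHOLDER.split(source)
    converted = "".join(
        part if PLACEHOLDER.fullmatch(part) else part.translate(ACCENT_MAP)
        for part in parts
    )
    padding = "~" * max(1, round(len(source) * expansion))
    return f"[!!! {converted} {padding} !!!]"


print(pseudo_localize("Edit Profile"))
print(pseudo_localize("Hello, {username}"))
```

The brackets make clipping obvious: if the closing marker is missing on screen, the container cannot hold an expanded string. Note that accented Latin text exercises space constraints only; it does not exercise right-to-left layout.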

Screen recording with verbal commentary: The tester records their session and narrates observations in real time. This documentation method is faster than writing a detailed written report and allows the development team to see each defect in its actual context rather than reading a description of it.

Standardized test data sets: A pre-prepared set of test inputs covering edge cases (one-character name, thirty-character name, zero balance, balance with three zeros, century-end date) used consistently across projects. Note that this overlaps with functional QA testing: a dataset designed to stress the system also stresses the localization. A thirty-character name reveals container overflow; a zero-balance scenario reveals whether the amount string handles the zero case correctly, for example whether it renders «٠ ريال» or «٠ ريالات». Reusing the same dataset across projects saves preparation time and guarantees consistent coverage.
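One way to keep such a dataset reusable is to store it as structured data rather than as notes in a document. The sketch below is a hypothetical layout in Python: the EdgeCase fields, the sample names, and the specific values (including 2099-12-31 as the "century-end" date) are assumptions for illustration and should be adapted to the fields your product actually displays.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class EdgeCase:
    label: str        # what this case is meant to stress
    username: str
    balance: Decimal
    on_date: date


DEFAULT_DATE = date(2025, 1, 15)  # arbitrary "normal" date for the other cases

EDGE_CASES = [
    EdgeCase("one-character name", "ع", Decimal("500"), DEFAULT_DATE),
    # Thirty repeated letters: not a real name, only a length probe.
    EdgeCase("thirty-character name", "م" * 30, Decimal("500"), DEFAULT_DATE),
    EdgeCase("Latin name in Arabic UI", "Alexander", Decimal("500"), DEFAULT_DATE),
    EdgeCase("zero balance", "سارة", Decimal("0"), DEFAULT_DATE),
    EdgeCase("balance with three zeros", "سارة", Decimal("1000"), DEFAULT_DATE),
    EdgeCase("century-end date", "سارة", Decimal("500"), date(2099, 12, 31)),
]

for case in EDGE_CASES:
    print(f"{case.label}: name length {len(case.username)}, "
          f"balance {case.balance}, date {case.on_date.isoformat()}")
```

Keeping the label next to the values makes it easier to record, in the test report, which input triggered a given defect.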

The Linguistic Report: Documenting What You Find

An undocumented defect is an unsolved defect, even if you found it. A well-structured linguistic report includes five elements for each issue:

- Location: The exact screen, flow, and component («Payment screen > Confirm button»)

- Defect type: One of the five categories above

- Current state: What actually appears on screen

- Problem description: Specific and measurable («Text overflows container by 8 characters at 375px viewport width»)

- Proposed resolution: The alternative string or the engineering change required

Add a priority column — High / Medium / Low — to help the development team sequence fixes. A defect that blocks completion of a core user flow is High. A layout imperfection on a rarely accessed screen is Low. Pluralization and inflection errors — while linguistically incorrect — are typically Low or Medium priority unless the product explicitly targets formal or educational contexts where grammatical precision is expected by users.
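If the report is kept in a tracker or exported as data, the five elements and the priority column map naturally onto a small record type. The following Python sketch is one possible shape, not a prescribed format: the class names, the triage_order helper, and the two sample entries (including the "Cart screen > Item counter" location) are invented for the example.

```python
from dataclasses import dataclass
from enum import Enum


class DefectType(Enum):
    TEXT_OVERFLOW = "Text overflow"
    TRUNCATION = "Truncation"
    BIDI_RENDERING = "Bidirectional rendering"
    DYNAMIC_STATE = "Dynamic state"
    PLURALIZATION = "Pluralization and inflection"


class Priority(Enum):
    HIGH = 1
    MEDIUM = 2
    LOW = 3


@dataclass
class DefectReport:
    location: str
    defect_type: DefectType
    current_state: str
    problem_description: str
    proposed_resolution: str
    priority: Priority


def triage_order(reports):
    """Return reports sorted High first; order within a priority is kept."""
    return sorted(reports, key=lambda r: r.priority.value)


reports = [
    DefectReport(
        location="Cart screen > Item counter",
        defect_type=DefectType.PLURALIZATION,
        current_state="٢ عناصر",
        problem_description="Count of 2 uses the plural instead of the dual",
        proposed_resolution="Add a dual form: عنصران",
        priority=Priority.LOW,
    ),
    DefectReport(
        location="Payment screen > Confirm button",
        defect_type=DefectType.TEXT_OVERFLOW,
        current_state="المتابعة إلى صفحة الدفع",
        problem_description="Text overflows container by 8 characters at 375px viewport width",
        proposed_resolution="Shorten the string or let the button grow",
        priority=Priority.HIGH,
    ),
]

for report in triage_order(reports):
    print(report.priority.name, "|", report.location, "|", report.defect_type.value)
```

Restricting the defect type to a fixed set keeps reports from different testers comparable across projects.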

Six Arabic-Specific Test Points

Beyond the standard testing methodology, six areas require explicit attention in Arabic localization QA:

1. Font weight testing: Arabic text in Regular weight may render acceptably while the same text in Light weight becomes illegible on certain typefaces. Verify every font weight in use across the product — not just the default body weight.

2. Tashkeel (diacritics) testing: Some products incorporate diacritically marked Arabic text in onboarding or educational contexts. Diacritics increase the vertical height of text — containers that were sized for unvoweled Arabic may overflow when voweled text is introduced.

3. Mixed numeral testing: A string containing Arabic-Indic numerals alongside a single Western Arabic numeral — because that numeral arrived from a database field in a different format — produces visual inconsistency. Test the sources of dynamically generated numbers, not only static strings.

4. Viewport size range testing: What renders correctly at 390px may break at 320px. Older devices remain common in several Arab markets; test across the full device range your product targets, not only current flagship specifications.

5. Screen reader testing: iOS VoiceOver and Android TalkBack read Arabic text — but alt text for images and ARIA labels may be incompletely translated or may contain terms that are technically correct but do not read naturally when spoken aloud. Include at least one screen reader pass in your QA cycle.

6. Search and filtering testing: Interfaces that allow searching Arabic content must handle diacritics and hamza variants: a search for «أسامة» should also find «أُسامة» (written with diacritics) and «اسامة» (written without the hamza). Whether the search engine normalizes these forms depends on its configuration. Confirm the behavior and ensure that any user-facing search guidance strings accurately describe what the engine will and will not match.
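For the search point above, the kind of normalization a tester should ask about can be sketched as follows. This is a simplified Python example under stated assumptions: it strips combining marks (tashkeel), removes the tatweel elongation character, and folds the common alef-with-hamza variants to a bare alef. The function name is hypothetical, and whether a given product should fold hamza at all is a product decision; real search engines usually provide their own configurable Arabic analyzers.

```python
import unicodedata

# Fold alef variants to bare alef (U+0627). Which variants to fold is a
# product decision; this set is an assumption for the example.
ALEF_FOLD = str.maketrans({
    "\u0622": "\u0627",  # alef with madda above
    "\u0623": "\u0627",  # alef with hamza above
    "\u0625": "\u0627",  # alef with hamza below
    "\u0671": "\u0627",  # alef wasla
})

TATWEEL = "\u0640"  # elongation character, carries no meaning


def normalize_for_search(text: str) -> str:
    """Strip diacritics and tatweel, then fold alef variants."""
    composed = unicodedata.normalize("NFC", text)
    without_marks = "".join(
        ch for ch in composed
        if unicodedata.category(ch) != "Mn" and ch != TATWEEL
    )
    return without_marks.translate(ALEF_FOLD)


queries = ["أسامة", "اسامة", "أُسامة"]
normalized = {normalize_for_search(q) for q in queries}
print(normalized)
print(len(normalized) == 1)  # all three spellings collapse to one key
```

A practical test is to index one spelling, search with the other two, and compare the product's results against what a normalization like this would predict.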

A Prompt for Generating a Custom Test Checklist

Use this prompt to generate a project-specific linguistic testing checklist rapidly at the start of any Arabic localization QA cycle:

You are a senior Arabic localization QA specialist. Generate a linguistic testing checklist for the following product:

Product type: [mobile app / web app / enterprise dashboard / e-commerce]
Platform: [iOS / Android / Web / cross-platform]
Key user flows: [list the 3–5 most important user journeys]
Special content types: [e.g., prices, dates, user-generated content, legal text, error messages, notifications, onboarding screens]
Target market: [Gulf / Levant / Egypt / North Africa / pan-Arab]
Known risk areas: [e.g., long product names, dynamic content, mixed LTR/RTL strings]

For each item in the checklist, specify:
1. What to test
2. Which screen or flow to test it in
3. What to look for (the failure mode)
4. What counts as a pass

Organize by: Static Display Tests → Interaction Tests → Edge Case Tests → Full Journey Tests

Flag any Arabic-specific risks based on the product type and market provided.

Run this at project kickoff and share the output with both your localization vendor and your QA team. The checklist creates shared expectations about what linguistic testing covers — and makes gaps visible before testing begins rather than after it ends.

Who Conducts the Linguistic Test

On small projects, the translator conducts testing after delivery and integration into the test environment. This is acceptable if adequate time is allocated and the tester has genuine access to the live build — not a screen-share during a meeting where they cannot interact with the interface directly.

On medium and large projects, linguistic testing is a separate role performed by a linguistic tester who is different from the translator and the reviewer. The reason is straightforward: whoever translated or reviewed the strings carries a memory of what the text is supposed to say. That memory actively impairs their ability to see what actually appears on screen.

One practical recommendation regardless of project size: if the translator is also the tester, require a minimum 24-hour gap between delivery and testing. Temporal distance makes a measurable difference in what a tester can see — strings that looked correct during translation read differently after a night’s sleep.

What’s Next

Article 4 — the final article in this series — addresses terminology infrastructure: how to build and cloud-manage technical glossaries that grow with your product and remain accessible to a distributed team, rather than a term register prepared once and forgotten in a shared drive. (See our article: Building and Cloud-Managing Technical Glossaries for Modern Terminology)

And for the UI constraints that linguistic testing most commonly surfaces — the space, mirroring, and register issues that start as translation decisions and end as rendering defects: (See our article: Arabic UI/UX Localization: RTL, Space Constraints & Cultural Tone)
